Benchmarking Stream Processing Frameworks with Bobby Evans

“Benchmarks are all crap, but there are some benchmarks that are better than others.”

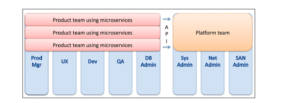

Stream processing engines are often the most varied component of a data engineering stack, and the big data ecosystem offers several compelling options. Assessing the differences between projects like Flink, Storm, and Spark Streaming is difficult without an agreed-upon set of metrics to compare them on. Fortunately, the Yahoo engineering team created a set of benchmarks to do exactly this. On today's episode, Jeff and Bobby compare streaming frameworks by their performance on several of Yahoo's benchmark tests.

Bobby Evans is an architect at Yahoo working on streaming frameworks, primarily Apache Storm. He also serves on the Apache Storm PMC (Project Management Committee) at the Apache Software Foundation.

Questions

- Is experimentation better than a research-based approach when benchmarking?

- What set of requirements must every streaming platform meet?

- What did you expect to be the most time-intensive computation in your benchmark pipeline?

- What is the big draw of Apache Flink?

- Will we all still want to do some form of batch processing, or will it become a relic of the past?

- What were the conclusions you drew from your benchmark tests?

- How will the world of streaming frameworks evolve going into the future?

Links

- Benchmarking Streaming Computation Engines at Yahoo!

- Microbatching

- Large Hadron Collider

- Distributed Cache

- Bobby on Twitter